Have you ever stared into a harshly lit bathroom, caught a glimpse of yourself at the worst possible angle, and thought, this thing must be defective? Maybe the lighting is off. No, the glass must be warped. You should sue whoever made it for emotional distress.

Absurd, right? And yet, that’s exactly how we’ve begun to treat social media.

The jury verdict against Meta and YouTube this week has been heralded as a turning point in the fight against Big Tech. A California jury awarded millions in damages to a young woman who argued that social media platforms harmed her mental health through addictive design and algorithmic amplification. The companies, unsurprisingly, have already signaled they will appeal. And they should — because while the facts of the case are tragic, the theory underpinning the verdict is dangerously flawed.

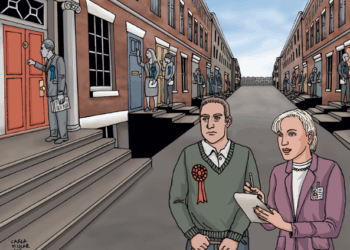

Anyone who knows how social media algorithms work will understand that the plaintiff wasn’t a victim of social media. She was a victim of herself. Social media algorithms show you what you want to see. Sometimes, what we want to see isn’t so pretty, especially when we are in a state of hurt and trauma. We search for what validates our worldview and situation.

It’s not always sinister. Teen girls will interact with content about their favorite pop star, and social media will recommend content similar to what they seek out. Likewise, boys interested in sports will receive highlights from last night’s big game. But sometimes, this delivery system becomes sinister. Anorexics will look for pictures of girls starving themselves, and the algorithm will feed them. Uncomfortable teen girls going through puberty will search for reasons why they feel weird in their developing bodies, and next thing you know, they want a double mastectomy and testosterone shots before they can vote.

The uncomfortable truth at the heart of this case is that algorithms are mirrors more than they are manipulators. In fact, we are manipulating algorithms through our online activity, whether we understand this or not. The content we see reflects our desires — sometimes healthy, often not. Blaming the mirror for what it reflects is emotionally satisfying, yes, but intellectually dishonest.

This does not mean social media companies are blameless. Big Tech pushes features like infinite scroll, autoplay, and recommendation engines to maximize engagement. The more time you spend on Instagram or YouTube, the more ads you will see. The more ads you see, the more money digital platforms make. But engagement is not mind control. Users choose to spend more time online, no matter how strong the pull. Will watching a video of a thin woman modeling revealing clothes make you insecure about your own body? Well, you could always click away from the video, even if it “just popped up” next. Try turning off your phone.

But oftentimes, we don’t want to. Strangely enough, young Americans, who increasingly struggle with an array of diagnosable mental health problems, lack the agency to click away from content that exacerbates their issues. In our country, there is a prevailing attitude that we are patients of our pain rather than participants. It is the idea that external forces are always victimizing our fragile selves, and that we have no power to resist these forces to take part in our own self-improvement.

Social media algorithms do not impose desires onto users; they refine and amplify desires that already exist. Artificial intelligence (AI), like social media algorithms, is fundamentally reactive. It learns from and responds to user input. When that input is dark, disordered, or self-destructive, the output can be as well.

Social media algorithms are AI. Because they don’t discriminate, they allow us to destroy our own brains. That may sound harsh, but it captures a fundamental reality of business: these systems — these products — optimize for engagement, not truth, health, or virtue.

The legal system is now being asked to draw lines it is poorly equipped to handle. Section 230 has long shielded digital platforms from liability for user-generated content, but plaintiffs are now reframing these cases as product liability claims focused on design rather than speech.

Meta and Google will appeal this verdict, and higher courts will almost certainly take a more skeptical view of the claims than a California jury. It is not hard to imagine the issue reaching the U.S. Supreme Court within the next few years. When it does, the justices will face a defining question of the digital age: To what extent are individuals responsible for their own consumption of algorithmically curated content?

America’s ethos depends on the assumption that individuals are capable of making choices, even bad ones, and bearing the consequences. If we abandon that assumption in the digital world, we will not solve the problem of technological harm.

If the supply of personalized social media algorithms weren’t fulfilling some psychological demand, Big Tech companies would not be as rich as they are today. Why is it that so many Americans feel anxious, depressed, and out of control? That is the question we should be asking.

Will paying a plaintiff a couple million dollars really stop the human pull of seeking out products that exacerbate psychological pain? Imagine the verdict survives appeal and opens the door to endless lawsuits by psychologically damaged plaintiffs. When Meta and Google are bankrupt, who will stop the next company from formulating a product that tickles the same masochistic itch?

Americans must reject the belief that we are sheep to the slaughter. You are not powerless. Your feelings are not always fact. Your own habits shape your life more than any algorithm ever could. Until we are willing to say that, no verdict, no regulation, and no lawsuit will fix what is actually broken.

Julianna Frieman is a writer who covers culture, technology, and civilization. She has an M.A. in Communications (Digital Strategy) from the University of Florida and a B.A. in Political Science from UNC Charlotte. Her work has been published by The American Spectator and The Federalist. Follow her on X at @juliannafrieman. Find her on Substack at juliannafrieman.substack.com.
